About

A character set is a repertoire of characters in which each character is assigned to (encoded as) a numeric code point.

A character set (like an alphabet) is any finite set of symbols (characters).

In other words, it is a mapping between strings and bytes, used to convert strings to bytes (and vice versa), as defined in the Encoding standard. Software uses it when reading character information from a file or writing it to one.
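The mapping between strings and bytes can be illustrated with a minimal Python sketch. The sample text is an arbitrary choice, and UTF-8 is only one of many possible mappings.

```python
# A string is a sequence of characters; encoding turns it into bytes.
text = "caf\u00e9"                    # "cafe" with an acute accent on the e

encoded = text.encode("utf-8")        # str -> bytes
decoded = encoded.decode("utf-8")     # bytes -> str

print(encoded)          # b'caf\xc3\xa9'
print(decoded == text)  # True
```

The accented letter takes two bytes in UTF-8, while the three unaccented letters take one byte each.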

Different encodings make trade-offs between the amount of storage required for a string and the speed of operations such as indexing into a string.
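As a rough illustration of this trade-off, the sketch below encodes the same five-character sample (an arbitrary choice) in three encodings and compares the sizes. It then shows why indexing is simple in a fixed-width encoding such as UTF-32.

```python
sample = "h\u00e9llo"  # 5 characters, one of them non-ASCII

# Storage cost of the same text in three encodings.
sizes = {name: len(sample.encode(name))
         for name in ("utf-8", "utf-16-le", "utf-32-le")}
print(sizes)  # {'utf-8': 6, 'utf-16-le': 10, 'utf-32-le': 20}

# With a fixed width of 4 bytes, character n starts at byte offset 4 * n.
utf32 = sample.encode("utf-32-le")
second_char = utf32[4 * 1:4 * 2].decode("utf-32-le")
print(second_char == "\u00e9")  # True
```

In UTF-8 the same lookup requires scanning from the start, because characters occupy between one and four bytes.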

In computer science, the terms:

- charset,

- code set

- coded character set (CCS)

- character encoding,

- character map,

- character set

- coding representation

- or code page

are historically synonymous, as the same standard would specify both a repertoire of characters and how they were to be encoded into a stream of bytes (defined by code units), usually with a single character per code unit.

The Java platform, which takes the term from RFC 2278, defines a charset as a named mapping between sequences of sixteen-bit Unicode code units and sequences of bytes.

A code page (or character encoding) is a:

- set of rules

- coding representation

- table of values

- encoding scheme

- list of selected characters

used to map a set of characters to their on-disk representation through code points.

Charsets are usually defined to support specific languages or groups of languages that share common writing systems. For example, code page 1253 provides the character codes required in the Greek writing system, and code page 1252 provides the characters for Latin writing systems including English, German and French.
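One way to check whether a code page covers a given text is simply to try encoding it. The helper name `supports` below is hypothetical, and the sketch relies on Python's built-in `cp1253` (Greek) and `cp1252` (Western European) codecs.

```python
def supports(text: str, code_page: str) -> bool:
    """Return True if every character of text exists in code_page."""
    try:
        text.encode(code_page)
        return True
    except UnicodeEncodeError:
        return False

greek = "\u0395\u03bb\u03bb\u03b7\u03bd\u03b9\u03ba\u03ac"  # "Greek" written in Greek
french = "Fran\u00e7ais"

print(supports(greek, "cp1253"))   # True
print(supports(greek, "cp1252"))   # False
print(supports(french, "cp1252"))  # True
```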

As a result of having many character encoding methods in use (and the need for backward compatibility with archived data), many computer programs must translate data between encoding schemes.

Decoder/Encoder

A decoder is an engine which transforms bytes in a specific charset into characters, and an encoder is an engine which transforms characters into bytes. Encoders and decoders operate on byte and character buffers. They are collectively referred to as coders.
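The idea of coders operating on buffers can be sketched with the incremental coders of Python's `codecs` module. This is only an analogy to the description above, not the API the text refers to. The example feeds a two-byte UTF-8 sequence to the decoder one byte at a time.

```python
import codecs

encoder = codecs.getincrementalencoder("utf-8")()
decoder = codecs.getincrementaldecoder("utf-8")()

# Encoder: characters -> bytes
data = encoder.encode("\u00e9", final=True)
print(data)  # b'\xc3\xa9'

# Decoder: bytes -> characters. The first byte alone is an incomplete
# sequence, so the decoder buffers it and returns an empty string.
first = decoder.decode(data[:1])
second = decoder.decode(data[1:], final=True)
print(repr(first))               # ''
print(second == "\u00e9")        # True
```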

Scheme

A character encoding scheme is a character encoding form plus byte serialization. There are seven character encoding schemes in Unicode: UTF-8, UTF-16, UTF-16BE, UTF-16LE, UTF-32, UTF-32BE, and UTF-32LE.
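The effect of byte serialization can be seen by encoding a single character with several schemes. This is a minimal sketch using Python's codec names. The unsuffixed `utf-16` codec writes a byte order mark and uses the platform's byte order, so only the presence of the mark is checked.

```python
import codecs

text = "A"  # U+0041

print(text.encode("utf-8"))      # b'A'
print(text.encode("utf-16-be"))  # b'\x00A'
print(text.encode("utf-16-le"))  # b'A\x00'
print(text.encode("utf-32-be"))  # b'\x00\x00\x00A'

# Without an explicit byte order, a byte order mark is prepended.
with_bom = text.encode("utf-16")
has_bom = with_bom.startswith((codecs.BOM_UTF16_LE, codecs.BOM_UTF16_BE))
print(has_bom)  # True
```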

See

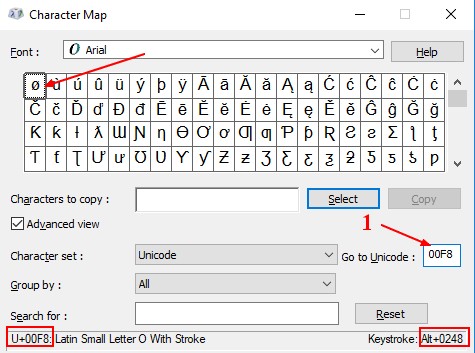

The Character Map of Windows (UTF-16 only)

Default

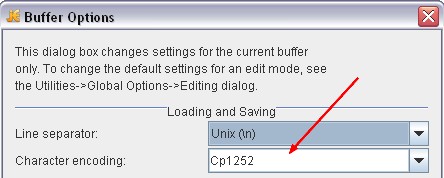

On Windows the default character encoding is typically Cp1252 (for Western European locales); on Unix it is usually UTF-8. Both of these encodings have illegal byte sequences (more in UTF-8 than in Cp1252).
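Illegal byte sequences can be probed by attempting a strict decode. The helper name `is_decodable` is hypothetical, and the results reflect the codec tables shipped with Python, which may differ in detail from other implementations.

```python
def is_decodable(data: bytes, encoding: str) -> bool:
    """Return True if data is a legal byte sequence in encoding."""
    try:
        data.decode(encoding)  # strict error handling is the default
        return True
    except UnicodeDecodeError:
        return False

print(is_decodable(b"\xe9", "cp1252"))  # True: a single accented letter
print(is_decodable(b"\xe9", "utf-8"))   # False: a lead byte with nothing after it
print(is_decodable(b"\x81", "cp1252"))  # False: unassigned in Python's cp1252 table

# Count how many single bytes are illegal on their own in each encoding.
illegal = {enc: sum(not is_decodable(bytes([b]), enc) for b in range(256))
           for enc in ("cp1252", "utf-8")}
```

In `illegal`, UTF-8 rejects every byte from 0x80 upward when it stands alone, whereas Cp1252 rejects only a handful of unassigned values.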

Order

See Text - Order

Common Charset

Unicode / UCS

See What is Unicode / Universal Coded Text Character Set (UCS)?

ASCII

See Character Set - American Standard Code for Information Interchange ( ASCII )

Example

In the ASCII encoding scheme for code page 850, for example:

- “A” is assigned code point X'41',

- and “B” is assigned code point X'42'.

Within a code page, each code point has only one specific meaning.
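These assignments can be checked with Python's built-in `cp850` codec. The accented letter is an extra sample added here to show a code point outside the ASCII range.

```python
# Code points of "A" and "B" in code page 850, shown in hexadecimal.
print("A".encode("cp850").hex())  # 41
print("B".encode("cp850").hex())  # 42

# Each code point decodes back to exactly one character.
print(b"\x41".decode("cp850"))    # A

# Outside the ASCII range the assignment is specific to the code page.
e_acute = "\u00e9".encode("cp850")
print(e_acute.hex())              # 82
```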

Single-byte, double-byte character sets and unicode

SBCS

DBCS

Multi-octet

DBCS did not allow combining, say, Japanese and Chinese in the same data stream because, depending on the code page, the same double-byte code points represent different characters in different languages.
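The ambiguity can be reproduced by decoding the same two bytes with a Japanese and a Chinese double-byte codec. The byte pair below is an arbitrary example, and Python's `shift_jis` and `gbk` codecs stand in for the respective code pages.

```python
raw = bytes([0x88, 0xEA])  # one double-byte code point

as_japanese = raw.decode("shift_jis")
as_chinese = raw.decode("gbk")

# Same bytes, different characters depending on the code page.
print(hex(ord(as_japanese)))      # 0x4e00
print(as_japanese == as_chinese)  # False
```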

Unicode was created to allow different languages to be stored in the same data stream. This one “codepage” could originally represent 64,000+ characters, and with the introduction of surrogates it can now represent 1,000,000+ characters (1,114,112 code points in total).
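The surrogate mechanism can be sketched as a small calculation. The function name `to_surrogates` is hypothetical, and the result is cross-checked against Python's UTF-16 encoder.

```python
def to_surrogates(code_point: int) -> tuple:
    """Split a code point above U+FFFF into a UTF-16 surrogate pair."""
    if not 0x10000 <= code_point <= 0x10FFFF:
        raise ValueError("only code points above U+FFFF need surrogates")
    offset = code_point - 0x10000      # 20 significant bits
    high = 0xD800 + (offset >> 10)     # top 10 bits
    low = 0xDC00 + (offset & 0x3FF)    # bottom 10 bits
    return high, low

high, low = to_surrogates(0x1F600)
print(hex(high), hex(low))  # 0xd83d 0xde00

# Python's encoder produces the same two 16-bit code units.
print(chr(0x1F600).encode("utf-16-be").hex())  # d83dde00

# Total number of Unicode code points, U+0000 through U+10FFFF.
print(0x10FFFF + 1)  # 1114112
```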

Problems of code pages

Unicode is strongly recommended in modern applications, but many applications or data files still depend on the legacy code pages. This can cause many problems:

- Programs need to know what code page to use in order to display the contents of files correctly. If a program uses the wrong code page it may show text as mojibake.

- The code page in use may differ between machines, so files created on one machine may be unreadable on another.

- Data is often improperly tagged with the code page, or not tagged at all, making determination of the correct code page to read the data difficult.

- Microsoft code pages differ to various degrees from some of the standards and from other vendors' implementations. This isn't a Microsoft issue per se, as it happens with all vendors, but the lack of consistency makes interoperability with other systems unreliable in some cases.

- The use of code pages limits the set of characters that may be used.

- Characters expressed in an unsupported code page may be converted to question marks (?) or other replacement characters, or to a simpler version (such as removing accents from a letter). In either case, the original character may be lost.
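Several of these problems are easy to reproduce. The sketch below uses arbitrary sample strings. It shows mojibake from reading UTF-8 bytes with the wrong code page, replacement by question marks, and one possible way accents get stripped.

```python
import unicodedata

original = "\u00e9"  # e with acute accent

# Mojibake: UTF-8 bytes read with the wrong code page.
mojibake = original.encode("utf-8").decode("cp1252")
print(len(mojibake))  # 2 (one character became two)

# Replacement: Greek letters have no code point in cp1252.
replaced = "\u0395\u03bb".encode("cp1252", errors="replace")
print(replaced)       # b'??'

# Simplification: decompose the letter, then drop the accent.
stripped = unicodedata.normalize("NFKD", original).encode("ascii", "ignore")
print(stripped)       # b'e'
```

In the last two cases the original character cannot be recovered from the output.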

Set the encoding (locale)

As a charset is required whenever text is read from or written to binary data, the character set (code page) is always an application attribute, on the client side as well as on the server side.

The encoding is set through what is known as the locale.
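As an illustration, a Python program can ask which encoding the current locale implies and can override it per file. The file name and sample text below are arbitrary, and the reported default depends on the machine.

```python
import locale
import os
import tempfile

# Encoding implied by the user's locale settings (varies by machine).
default_encoding = locale.getpreferredencoding(False)
print(default_encoding)

# An application can ignore the locale and state the encoding explicitly.
path = os.path.join(tempfile.mkdtemp(), "sample.txt")
with open(path, "w", encoding="utf-8") as f:
    f.write("caf\u00e9")
with open(path, encoding="utf-8") as f:
    content = f.read()
```

Passing `encoding` explicitly makes the result independent of the machine's locale.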

Database

For instance, when an application program connects to the database, the database manager determines the code page of the application.

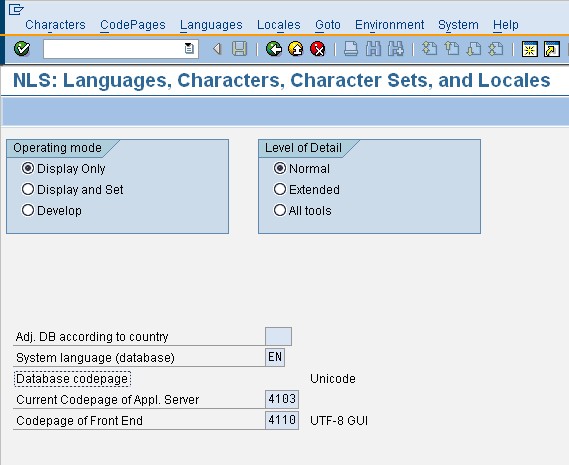

SAP

(national language support = NLS)

Windows

Application

Start > Control Panel > Regional and Language Options > Languages > Text service and Input Languages

Dos

To find the current console code page, issue the CHCP command in the Command Prompt window.

C:\Documents and Settings\Rixni>chcp

Active code page: 850

Linux

Html

File

Some editors have implemented a character set scanner that may tell you with which character set a file was saved.

For instance, in jEdit, see Utilities > Buffer Options.

Detection

The encoding can be detected by checking the BOM (byte order mark) sequence. If no BOM is present, the UTF-8 encoding is generally assumed.

- Java: ICU detection
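BOM-based detection can be sketched in a few lines of Python. The function name `detect_encoding` is hypothetical, and the fallback to UTF-8 follows the assumption stated above. The UTF-32 marks are tested before the UTF-16 ones because the little-endian UTF-32 mark begins with the UTF-16 one.

```python
import codecs

# Order matters: BOM_UTF32_LE starts with the bytes of BOM_UTF16_LE.
BOMS = [
    (codecs.BOM_UTF8, "utf-8-sig"),
    (codecs.BOM_UTF32_LE, "utf-32"),
    (codecs.BOM_UTF32_BE, "utf-32"),
    (codecs.BOM_UTF16_LE, "utf-16"),
    (codecs.BOM_UTF16_BE, "utf-16"),
]

def detect_encoding(data: bytes, default: str = "utf-8") -> str:
    """Return a codec name based on the BOM, or default if none is found."""
    for bom, name in BOMS:
        if data.startswith(bom):
            return name
    return default

sample = codecs.BOM_UTF16_LE + "hi".encode("utf-16-le")
encoding = detect_encoding(sample)
print(encoding)                 # utf-16
print(sample.decode(encoding))  # hi
print(detect_encoding(b"hi"))   # utf-8
```

The returned codec names consume the BOM while decoding, so the mark does not appear in the resulting string.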

Documentation / Reference

- Encoding, A. van Kesteren, J. Bell, A. Phillips. W3C.