Introduction

TPC-H is an ad-hoc, decision support benchmark.

It consists of a suite of business oriented ad-hoc queries and concurrent data modifications.

The TPC-H benchmark models the analysis end of the business environment where trends are computed and refined data are produced to support the making of sound business decisions.

This benchmark illustrates decision support systems that

- Examine large volumes of data;

- Execute queries with a high degree of complexity; (???)

- Give answers to critical business questions. (???)

To achieve the optimal compromise between performance and operational requirements, the database administrator can set, once and for all:

- the locking levels

- and the concurrent scheduling rules

for queries and refresh functions.

TPC-H does not represent the activity of any particular business segment, but rather any industry which must manage sell, or distribute a product worldwide (e.g., car rental, food distribution, parts, suppliers, etc.).

Articles Related

Benchmark

The TPC believes that comparisons of TPC-H results measured against different database sizes are misleading and discourages such comparisons.

The only benchmark results comparable to TPC-H are other TPC-H results compliant with the same revision.

Benchmark results are highly dependent upon workload, specific application requirements, and systems design and implementation.

Relative system performance will vary as a result of these and other factors. Therefore, TPC-H should not be used as a substitute for a specific customer application benchmarking when critical capacity planning and/or product evaluation decisions are contemplated.

Rules

- The physical clustering of records within the database is allowed as long as this clustering does not alter the logical independence of each table.

- Horizontal partitioning of base tables or auxiliary structures created by database directives is allowed. Groups of rows from a table or auxiliary structure may be assigned to different files, disks, or areas.

Partitioning schemes rules. They:

- may not rely on any knowledge of the data stored in the table except the minimum and maximum values of columns used for the partitioning field.

- must allow the insertion of values of the partitioning column(s) outside the range covered by the minimum and maximum values

- can partition on multiple-level

Partition schemes key rules:

- A partitioning field must be one and only one of the columns listed

- For integer-based partition, the partitioning schemes must define each partition to accept an equal portion of the range between the minimum and maximum values of the partitioning column(s).

- For date-based partitions, it is permissible to partition into equally sized domains based upon an integer granularity of days, weeks, months, or years (e.g., 30 days, 4 weeks, 1 month, 1 year, etc.). For date-based partition granularities other than days, a partition boundary may extend beyond the minimum or maximum boundaries.

Metrics

Performance - QphH@Size

The performance metric reported by TPC-H is called the TPC-H Composite Query-per-Hour Performance Metric (QphH@Size), and reflects multiple aspects of the capability of the system to process queries including:

- the selected database size against which the queries are executed,

- the query processing power when queries are submitted by a single stream

- and the query throughput when queries are submitted by multiple concurrent users.

Price/Performance - $/QphH@Size

The TPC-H Price/Performance metric is expressed as $/QphH@Size.

Artifacts

The tpc-h leverages:

- a database model

- queries

- and refresh functions (update)

A mix of queries and refresh functions is subject to specific ACIDity requirements, since queries and refresh functions may execute concurrently;

Query

These queries have been given a realistic context, portraying the activity of a wiki/wholesale supplier.

The queries that have been selected exhibit the following characteristics:

- They have a high degree of complexity;

- They use a variety of access

- They are of an ad-hoc nature;

- They examine a large percentage of the available data;

- They all differ from each other;

- They contain query parameters that change across query executions.

These selected queries provide answers to the following classes of business analysis:

- Pricing and promotions;

- Supply and demand management;

- Profit and revenue management;

- Customer satisfaction study;

- Market share study;

- Shipping management.

QGEN is a utility provided by the TPC to generate executable query text with substitution parameters. The substitution parameters are created randomly. If a tool is created instead of QGEN, the behavior of its seeds must be satisfied.

Refresh function

Their purpose of the refresh function is to demonstrate the update functionality for the DBMS, while simultaneously assessing an appropriate performance cost to the maintenance of auxiliary data structures, such as secondary indices.

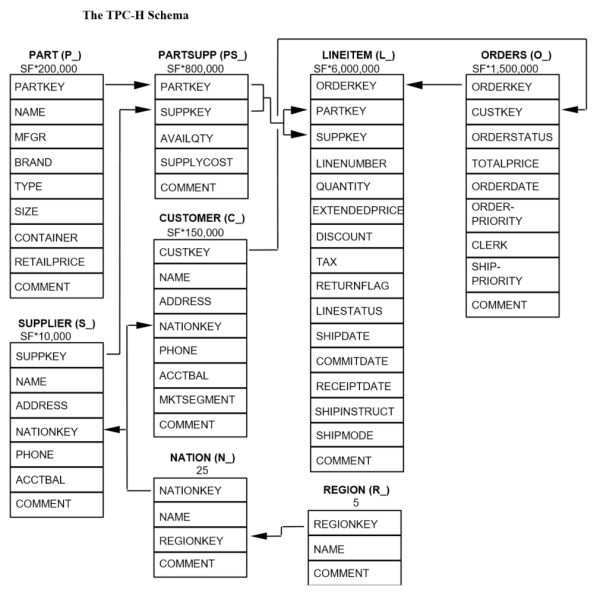

Database Schema

where:

- The parentheses following each table name contain the prefix of the column names for that table;

- The arrows point in the direction of the one-to-many relationships between tables;

- The number/formula below each table name represents the cardinality (number of rows) of the table. Some are factored by SF, the Scale Factor, to obtain the chosen database size. The cardinality for the LINEITEM table is approximate.

Datatype

The following datatype definitions apply to the list of columns of each table:

- Identifier means that the column must be able to hold any key value generated for that column and be able to support at least 2,147,483,647 unique values; A common implementation of this datatype will be an integer.

- Integer: means that the column must be able to exactly represent integer values (i.e., values in increments of 1) in the range of at least -2,147,483,646 to 2,147,483,647.

- Decimal: means that the column must be able to represent values in the range -9,999,999,999.99 to +9,999,999,999.99 in increments of 0.01; the values can be either represented exactly or interpreted to be in this range;

- Big Decimal is of the Decimal datatype as defined above, with the additional property that it must be large enough to represent the aggregated values stored in temporary tables created within query variants;

- Fixed text, size N means that the column must be able to hold any string of characters of a fixed length of N. If the string it holds is shorter than N characters, then trailing spaces must be stored in the database or the database must automatically pad with spaces upon retrieval such that a CHAR_LENGTH() function will return N.

- Variable text, size N means that the column must be able to hold any string of characters of a variable length with a maximum length of N.

- Date is a value whose external representation can be expressed as YYYY-MM-DD, where all characters are numeric. A date must be able to express any day within at least 14 consecutive years.

All the data type by column are defined in the documentation. A default SQL schema file dss.ddl is also available in the dbgen dir of the toolkit.

Constraints

The use of constraints is optional and limited to primary key, foreign key, check, and not null constraints.

- All defined constraints must be enforced and validated before the load test is complete

- Constraints must be enforced either at the statement level or at the transaction level

- The NOT NULL attribute may be used for any column.

- Defining a primary key constraint can only be done for the columns listed.

- Columns listed as ‘Foreign Key’ may be defined as foreign key constraints.

- Check Constraints: Check constraints may be defined to restrict the database contents. In order to support evolutionary change, the check constraints must not rely on knowledge of the enumerated domains of each column.

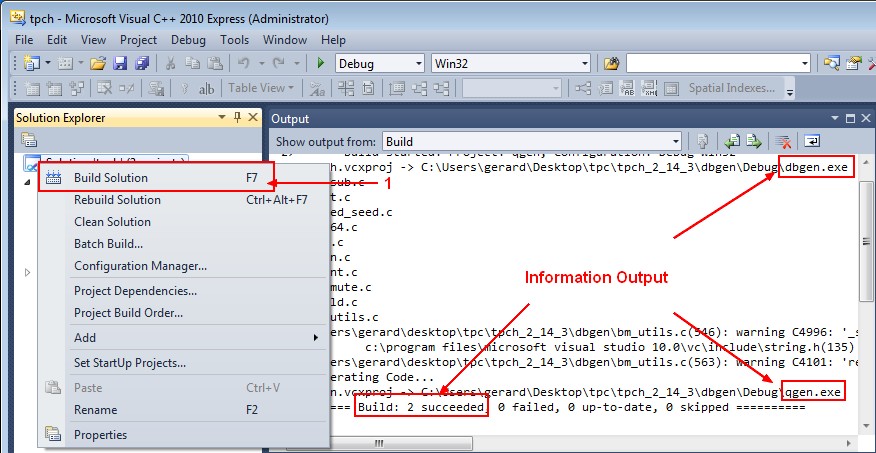

Building the generator

ANSI C++ Compiler

on Windows

Compiler

Download and Install Microsoft Visual C++ Express

makefile

- Copy the file makefile.suite to makefile

- Change the following values

################

## CHANGE NAME OF ANSI COMPILER HERE

################

CC = CL

# Current values for DATABASE are: INFORMIX, DB2, TDAT (Teradata)

# SQLSERVER, SYBASE, ORACLE, VECTORWISE

# Current values for MACHINE are: ATT, DOS, HP, IBM, ICL, MVS,

# SGI, SUN, U2200, VMS, LINUX, WIN32

# Current values for WORKLOAD are: TPCH

DATABASE= ORACLE

MACHINE = WIN32

WORKLOAD = TPCH

#

CFLAGS = /DEBUG /DDBNAME=\"dss\" /D$(MACHINE) /D$(DATABASE) /D$(WORKLOAD) /DRNG_TEST /D_FILE_OFFSET_BITS=64

LDFLAGS = /O2

# The OBJ,EXE and LIB macros will need to be changed for compilation under

# Windows NT

OBJ = .obj

EXE = .EXE

LIBS =

where:

- CC is the executable compiler

- CFLAGS are option to compile the code. Compiler Options Listed Alphabetically

- LDFLAGS is for the linking stage. Linker option of CL

Nmake

- Open a command dos

- Add the bin directory of Visual Studio to the PATH

SET PATH=%PATH%;C:\Program Files\Microsoft Visual Studio 10.0\VC\bin\

- Set up the environment variable. The LIB environement variable is set and is then not needed in the makefile

echo %LIB%

%LIB%

vcvars32.bat

Setting environment for using Microsoft Visual Studio 2010 x86 tools.

echo %LIB%

C:\Program Files\Microsoft Visual Studio 10.0\VC\LIB;C:\Program Files\Microsoft SDKs\Windows\v7.0A\lib;

- Go to the unzipped tpch_2_14_3 directory and call the nmake executable

cd C:\Users\gerard\Desktop\tpc\tpch_2_14_3\dbgen

nmake /f makefile

Microsoft (R) Program Maintenance Utility Version 10.00.30319.01

Copyright (C) Microsoft Corporation. All rights reserved.

CL /DEBUG /DDBNAME=\"dss\" /DWIN32 /DORACLE /DTPCH /DRNG_TEST /D_FILE_OFFSET_BITS=64 /c build.c driver.c bm_util

s.c rnd.c print.c load_stub.c bcd2.c speed_seed.c text.c permute.c rng64.c

Microsoft (R) 32-bit C/C++ Optimizing Compiler Version 16.00.30319.01 for 80x86

Copyright (C) Microsoft Corporation. All rights reserved.

build.c

driver.c

bm_utils.c

rnd.c

print.c

load_stub.c

bcd2.c

speed_seed.c

text.c

permute.c

rng64.c

Generating Code...

CL /DEBUG /DDBNAME=\"dss\" /DWIN32 /DORACLE /DTPCH /DRNG_TEST /D_FILE_OFFSET_BITS=64 /O2 -o dbgen.EXE build.obj

driver.obj bm_utils.obj rnd.obj print.obj load_stub.obj bcd2.obj speed_seed.obj text.obj permute.obj rng64.obj

Microsoft (R) 32-bit C/C++ Optimizing Compiler Version 16.00.30319.01 for 80x86

Copyright (C) Microsoft Corporation. All rights reserved.

cl : Command line warning D9035 : option 'o' has been deprecated and will be removed in a future release

Microsoft (R) Incremental Linker Version 10.00.30319.01

Copyright (C) Microsoft Corporation. All rights reserved.

/out:build.exe

/out:dbgen.EXE

build.obj

driver.obj

bm_utils.obj

rnd.obj

print.obj

load_stub.obj

bcd2.obj

speed_seed.obj

text.obj

permute.obj

rng64.obj

CL /DEBUG /DDBNAME=\"dss\" /DWIN32 /DORACLE /DTPCH /DRNG_TEST /D_FILE_OFFSET_BITS=64 /c qgen.c varsub.c

Microsoft (R) 32-bit C/C++ Optimizing Compiler Version 16.00.30319.01 for 80x86

Copyright (C) Microsoft Corporation. All rights reserved.

qgen.c

varsub.c

Generating Code...

CL /DEBUG /DDBNAME=\"dss\" /DWIN32 /DORACLE /DTPCH /DRNG_TEST /D_FILE_OFFSET_BITS=64 /O2 -o qgen.EXE build.obj b

m_utils.obj qgen.obj rnd.obj varsub.obj text.obj bcd2.obj permute.obj speed_seed.obj rng64.obj

Microsoft (R) 32-bit C/C++ Optimizing Compiler Version 16.00.30319.01 for 80x86

Copyright (C) Microsoft Corporation. All rights reserved.

cl : Command line warning D9035 : option 'o' has been deprecated and will be removed in a future release

Microsoft (R) Incremental Linker Version 10.00.30319.01

Copyright (C) Microsoft Corporation. All rights reserved.

/out:build.exe

/out:qgen.EXE

build.obj

bm_utils.obj

qgen.obj

rnd.obj

varsub.obj

text.obj

bcd2.obj

permute.obj

speed_seed.obj

rng64.obj

On Linux

Makefile

################

## CHANGE NAME OF ANSI COMPILER HERE

################

CC = GCC

# Current values for DATABASE are: INFORMIX, DB2, TDAT (Teradata)

# SQLSERVER, SYBASE, ORACLE, VECTORWISE

# Current values for MACHINE are: ATT, DOS, HP, IBM, ICL, MVS,

# SGI, SUN, U2200, VMS, LINUX, WIN32

# Current values for WORKLOAD are: TPCH

DATABASE= ORACLE

MACHINE = LINUX

WORKLOAD = TPCH

#

CFLAGS = -g -DDBNAME=\"dss\" -D$(MACHINE) -D$(DATABASE) -D$(WORKLOAD) -DRNG_TEST -D_FILE_OFFSET_BITS=64

LDFLAGS = -O

# The OBJ,EXE and LIB macros will need to be changed for compilation under

# Windows NT

OBJ = .o

EXE =

LIBS = -lm

where the following GCC Options:

- -g Produce debugging information in the operating system's native format (stabs, COFF, XCOFF, or DWARF 2).

- -O, the compiler tries to reduce code size and execution time

make

make

Testing the application

dbgen.exe -h

TPC-H Population Generator (Version 2.14.3 build 0)

Copyright Transaction Processing Performance Council 1994 - 2010

USAGE:

dbgen [-{vf}][-T {pcsoPSOL}]

[-s <scale>][-C <procs>][-S <step>]

dbgen [-v] [-O m] [-s <scale>] [-U <updates>]

Basic Options

===========================

-C <n> -- separate data set into <n> chunks (requires -S, default: 1)

-f -- force. Overwrite existing files

-h -- display this message

-q -- enable QUIET mode

-s <n> -- set Scale Factor (SF) to <n> (default: 1)

-S <n> -- build the <n>th step of the data/update set (used with -C or -U)

-U <n> -- generate <n> update sets

-v -- enable VERBOSE mode

Advanced Options

===========================

-b <s> -- load distributions for <s> (default: dists.dss)

-d <n> -- split deletes between <n> files (requires -U)

-i <n> -- split inserts between <n> files (requires -U)

-T c -- generate cutomers ONLY

-T l -- generate nation/region ONLY

-T L -- generate lineitem ONLY

-T n -- generate nation ONLY

-T o -- generate orders/lineitem ONLY

-T O -- generate orders ONLY

-T p -- generate parts/partsupp ONLY

-T P -- generate parts ONLY

-T r -- generate region ONLY

-T s -- generate suppliers ONLY

-T S -- generate partsupp ONLY

To generate the SF=1 (1GB), validation database population, use:

dbgen -vf -s 1

To generate updates for a SF=1 (1GB), use:

dbgen -v -U 1 -s 1

qgen.EXE -h

TPC-H Parameter Substitution (v. 2.14.3 build 0)

Copyright Transaction Processing Performance Council 1994 - 2010

USAGE: qgen.EXE <options> [ queries ]

Options:

-a -- use ANSI semantics.

-b <str> -- load distributions from <str>

-c -- retain comments found in template.

-d -- use default substitution values.

-h -- print this usage summary.

-i <str> -- use the contents of file <str> to begin a query.

-l <str> -- log parameters to <str>.

-n <str> -- connect to database <str>.

-N -- use default rowcounts and ignore :n directive.

-o <str> -- set the output file base path to <str>.

-p <n> -- use the query permutation for stream <n>

-r <n> -- seed the random number generator with <n>

-s <n> -- base substitutions on an SF of <n>

-v -- verbose.

-t <str> -- use the contents of file <str> to complete a query

-x -- enable SET EXPLAIN in each query.

Generating the data

Scale factors

Scale factors used for the test database must be chosen from the set of fixed scale factors defined as follows:

| Scale factor (SF) | 1 | 10 | 30 | 100 | 300 | 1000 | 3000 | 10000 | 30000 | 100000 |

|---|---|---|---|---|---|---|---|---|---|---|

| Database sizes | 1GB | 10GB | 30GB | 100GB | 300GB | 1000GB | 3000GB | 10000GB | 30000GB | 100000GB |

where:

- GB stands for gigabyte, defined to be 2^30 bytes.

Dbgen

- Copy Dbgen to the root directory (tpch_2_16_0\tpch_2_15_0\dbgen)

- and start the following command to generate 1GB:

dbgen -vf -s 1

TPC-H Population Generator (Version 2.14.3)

Copyright Transaction Processing Performance Council 1994 - 2010

Generating data for suppliers table/

Preloading text ... 100%

done.

Generating data for customers tabledone.

Generating data for orders/lineitem tablesdone.

Generating data for part/partsupplier tablesdone.

Generating data for nation tabledone.

Generating data for region tabledone.

Java Port: https://github.com/airlift/tpch

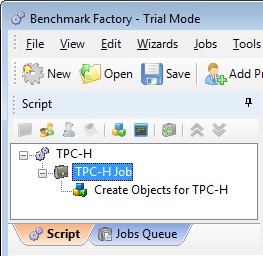

With Benchmark factory

Creation of the TPC_H user

CREATE USER TPC_H IDENTIFIED BY TPC_H

DEFAULT tablespace users

TEMPORARY tablespace temp

quota unlimited ON users;

GRANT CONNECT, resource, CREATE VIEW TO TPC_H;

Load Scenario

- Create a Load scenario to create only the object:

- Right Click and submit a job

Summary

.............

Loading table H_Lineitem ( 5997000 (99,95%) rows completed )

Loading table H_Lineitem ( 5998000 (99,97%) rows completed )

Completed loading table H_Lineitem.

Create Indexes

Test completed successfully.

** Job finished.

Total Time to perform the job:

Start Time: 5-4-2012 16:27:53

End Time : 5-4-2012 16:32:49

Elapsed : 0 Days 00:04:55.775

Library

- https://github.com/airlift/tpch/ - Java port

Support

Open failed for .\dists.dss

TPC-H Population Generator (Version 2.14.3)

Copyright Transaction Processing Performance Council 1994 - 2010

Open failed for .\dists.dss at c:\users\gerard\desktop\tpc\tpch_2_14_3\dbgen\bm_utils.c:308

By default, the generator use the b option and search the default file dists.dss. Try to use the -h operator.

fatal error U1001: syntax error : illegal character '{'

C:\Users\gerard\Desktop\tpc\tpch_2_14_3\dbgen>nmake /f makefile

Microsoft (R) Program Maintenance Utility Version 10.00.30319.01

Copyright (C) Microsoft Corporation. All rights reserved.

makefile(127) : fatal error U1001: syntax error : illegal character '{' in macro

Stop.

In the makefile, replace:

- { with (

- } with (

Just for info

- Open the file: tpch_2_14_3\dbgen\tpch.sln

- Right the Solution 'tpch'(2 projects) and choose the “Build Solution” option.